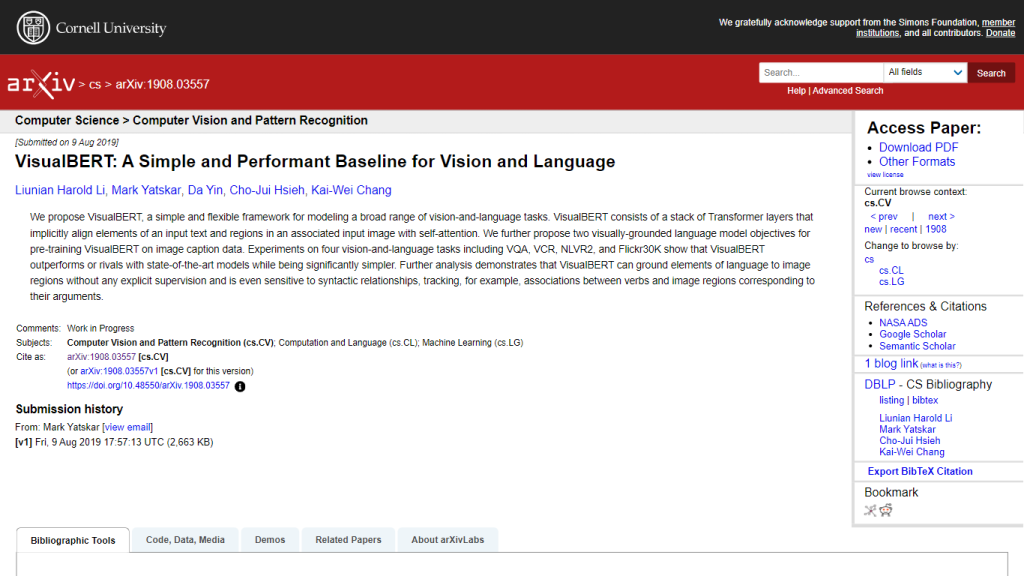

What is VisualBERT?

VisualBERT is a model for joint vision-and-language understanding. It relies on a stack of Transformer layers that build deep representations from both textual and visual inputs. Pre-training on large-scale image caption data with visually grounded language model objectives further enhances its ability to understand elements in an image and align them with their linguistic descriptions.

Key Features & Benefits of VisualBERT

Transformer Layer Architecture: Stacked Transformer layers implicitly align text with image regions.

Visually-Grounded Pre-training Objectives: Pre-training on image caption data improves contextual understanding.

Performance on Vision-and-Language Tasks: Strong results on the VQA, VCR, NLVR2, and Flickr30K tasks.

Unsupervised Grounding Capability: Linguistic elements can be grounded into image regions without explicit supervision.

Sensitivity to Syntactic Relationships: It tracks associations between language elements and components of an image, for example between verbs and the image regions corresponding to their arguments.

Using VisualBERT offers several advantages: deeper comprehension of elaborate visual and textual data, improved performance on a range of vision-and-language tasks, and the ability to ground language in images without explicit supervision, which makes it flexible and efficient.

Use Cases and Applications of VisualBERT

VisualBERT performs well across a variety of vision-and-language tasks, including but not limited to the following:

- VQA: Visual Question Answering – Answering questions about visual content.

- VCR: Visual Commonsense Reasoning – Understanding and reasoning about commonsense scenarios in images.

- NLVR2: Natural Language for Visual Reasoning for Real – Deciding whether a natural-language statement is true of a pair of images.

- Flickr30K: Region-to-phrase grounding – Linking phrases in Flickr30K captions to the image regions they describe.

Sectors where VisualBERT could contribute include health, education, and marketing. In health, it could help analyze medical images by associating textual descriptions with visual data. In education, it could improve e-learning platforms through better contextual understanding of multimodal content. Marketing professionals could use it to analyze and optimize visual advertising content.

Using VisualBERT

Using VisualBERT involves the following steps:

- Data Preparation: Make sure your dataset contains both visual and textual elements.

- Model Initialization: Load the pre-trained VisualBERT model, and fine-tune it if your task requires it.

- Input Processing: Convert both the images and the text into the inputs the model expects.

- Run Model: Run the model to obtain predictions or other outputs.

- Interpreting Output: Interpret the model outputs in the context of your requirements.

In most cases this involves fine-tuning VisualBERT on your own dataset and task. VisualBERT is a model rather than an end-user application, so each of these steps is carried out in code rather than through a graphical interface.
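As a rough illustration of the input processing step, the sketch below assembles one text-plus-image example as plain Python lists. The field names follow the inputs of the Hugging Face `VisualBertModel` (`input_ids`, `token_type_ids`, `attention_mask`, `visual_embeds`, `visual_token_type_ids`, `visual_attention_mask`), but no library is called here. The toy vocabulary, the helpers `encode_text` and `build_inputs`, and the 4-dimensional region features are hypothetical stand-ins. In a real pipeline the token ids would come from a BERT tokenizer and the region features from an object detector.

```python
from typing import Dict, List

# Toy vocabulary (hypothetical); a real pipeline would use a BERT tokenizer.
VOCAB = {"[PAD]": 0, "[UNK]": 1, "[CLS]": 2, "[SEP]": 3,
         "a": 4, "dog": 5, "catches": 6, "frisbee": 7}


def encode_text(text: str, max_len: int) -> Dict[str, List[int]]:
    """Map whitespace-separated words to ids, then pad to max_len."""
    words = text.lower().split()[: max_len - 2]  # leave room for [CLS] and [SEP]
    ids = [VOCAB["[CLS]"]]
    ids += [VOCAB.get(word, VOCAB["[UNK]"]) for word in words]
    ids += [VOCAB["[SEP]"]]
    real = len(ids)
    pad = max_len - real
    return {
        "input_ids": ids + [VOCAB["[PAD]"]] * pad,
        "token_type_ids": [0] * max_len,            # segment 0 marks text positions
        "attention_mask": [1] * real + [0] * pad,   # 0 hides padding positions
    }


def build_inputs(text: str, region_features: List[List[float]],
                 max_len: int = 8) -> Dict[str, list]:
    """Combine encoded text with one feature vector per image region."""
    inputs: Dict[str, list] = dict(encode_text(text, max_len))
    num_regions = len(region_features)
    inputs["visual_embeds"] = [list(feat) for feat in region_features]
    inputs["visual_token_type_ids"] = [1] * num_regions   # segment 1 marks image regions
    inputs["visual_attention_mask"] = [1] * num_regions   # every region is visible
    return inputs


# Two made-up region feature vectors standing in for detector output.
example = build_inputs(
    "A dog catches a frisbee",
    [[0.1, 0.9, 0.3, 0.0], [0.7, 0.2, 0.5, 0.4]],
)
print(example["input_ids"])       # [2, 4, 5, 6, 4, 7, 3, 0]
print(example["attention_mask"])  # [1, 1, 1, 1, 1, 1, 1, 0]
```

Keeping text and image regions in separate, parallel fields makes it easy to tell the two modalities apart through their segment ids once they are combined into a single sequence inside the model.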

How VisualBERT Works

VisualBERT uses a stack of Transformer layers and self-attention mechanisms to align textual and visual representations. It is pre-trained on image caption data, which further improves its ability to ground elements of language in the relevant image regions. The process involves the following steps:

- Text and Image Encoding: Both textual and visual inputs are encoded into vector representations.

- Self-Attention Mechanism: Transformer layers use self-attention to encode dependencies within and across the input text and image regions.

- Alignment and Grounding: The model aligns textual elements with visual regions, grounding them without explicit supervision.

- Output Generation: The aligned, grounded representations are used to produce the output for the target task.
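To make the self-attention step more concrete, here is a minimal sketch of scaled dot-product attention over a joint sequence of text and region vectors. It is a deliberate simplification and not VisualBERT's actual implementation: it uses a single head, it skips the learned query, key, and value projections, and the 3-dimensional embeddings are invented for illustration. The names `softmax`, `self_attention`, and `attend` are hypothetical helpers.

```python
import math
from typing import List

Vector = List[float]


def softmax(scores: List[float]) -> List[float]:
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(sequence: List[Vector]) -> List[List[float]]:
    """Row i holds how strongly position i attends to every position."""
    dim = len(sequence[0])
    weights = []
    for query in sequence:
        # Simplification: raw embeddings act as both queries and keys.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in sequence]
        weights.append(softmax(scores))
    return weights


def attend(sequence: List[Vector], weights: List[List[float]]) -> List[Vector]:
    """Each output vector is a weighted average of all input vectors."""
    dim = len(sequence[0])
    return [[sum(w * vec[d] for w, vec in zip(row, sequence)) for d in range(dim)]
            for row in weights]


# Made-up embeddings: two text tokens followed by two image regions.
text_embeds = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]    # "dog", "frisbee"
region_embeds = [[0.9, 0.1, 0.0], [0.1, 0.8, 0.1]]  # region 0, region 1
joint = text_embeds + region_embeds                 # one sequence, both modalities

weights = self_attention(joint)
contextual = attend(joint, weights)

# "dog" (position 0) attends more to region 0 (position 2) than to region 1 (position 3).
print(weights[0][2] > weights[0][3])  # True
```

Because text tokens and image regions sit in the same sequence, every token can attend to every region and vice versa, which is what allows alignment to emerge without region-level labels.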

Pros and Cons of VisualBERT

Benefits

- High performance in a range of vision-and-language tasks.

- Allows grounding of language elements to images without explicit supervision.

- Effective at capturing syntactic relationships in language.

Possible Drawbacks

- Training and fine-tuning can be computationally expensive.

- Accuracy depends on the nature and quality of the training data.

User feedback indicates that VisualBERT performs well on challenging vision-and-language tasks, though users also report that it needs substantial computational resources to work at its best.

Conclusion about VisualBERT

VisualBERT is a powerful AI model that effectively merges vision and language processing. With features such as unsupervised grounding and sensitivity to syntactic relationships, it adapts well to a range of applications. Although it demands substantial computational resources, its strong performance and adaptability make it valuable for industries that need to apply AI to complex vision-and-language tasks.

Future work may focus on improving efficiency and adding functionality, making VisualBERT even more capable at fusing visual and textual data.

VisualBERT Frequently Asked Questions

What is VisualBERT?

VisualBERT is a flexible framework for modeling a broad range of vision-and-language tasks. It is built from a stack of Transformer layers that use self-attention.

At which tasks does VisualBERT excel?

VisualBERT performs well on VQA, VCR, NLVR2, and Flickr30K.

How does VisualBERT align language with image regions?

VisualBERT aligns elements of text with the associated image regions through the self-attention in its Transformer layers.
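One way to picture this alignment is to read a grounding straight off the attention weights. The sketch below takes, for each text token, the image region that receives the largest attention weight. The function `ground_tokens` is a hypothetical helper and the weights are made up for illustration. A real analysis would read the weights from a specific layer and attention head of a trained model, and different heads can behave quite differently.

```python
from typing import Dict, List


def ground_tokens(tokens: List[str], attention: List[List[float]],
                  num_regions: int) -> Dict[str, int]:
    """Pick, for each text token, the region with the largest attention weight.

    attention[i] is the row for token i over the joint sequence, with the
    text positions first and the num_regions region positions after them.
    """
    offset = len(tokens)  # region positions start right after the text positions
    grounding = {}
    for i, token in enumerate(tokens):
        region_weights = attention[i][offset: offset + num_regions]
        grounding[token] = max(range(num_regions), key=lambda r: region_weights[r])
    return grounding


tokens = ["dog", "frisbee"]
# Made-up attention rows: columns are [dog, frisbee, region 0, region 1].
attention = [
    [0.30, 0.10, 0.45, 0.15],
    [0.10, 0.30, 0.15, 0.45],
]

grounding = ground_tokens(tokens, attention, num_regions=2)
print(grounding)  # {'dog': 0, 'frisbee': 1}
```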

Can VisualBERT understand syntactic relationships in language?

Yes. VisualBERT is sensitive to syntactic relationships in language. For example, it can associate a verb with the image regions corresponding to its arguments.

Does VisualBERT require explicit supervision to ground language to images?

No. VisualBERT can ground elements of language to image regions without any explicit supervision.