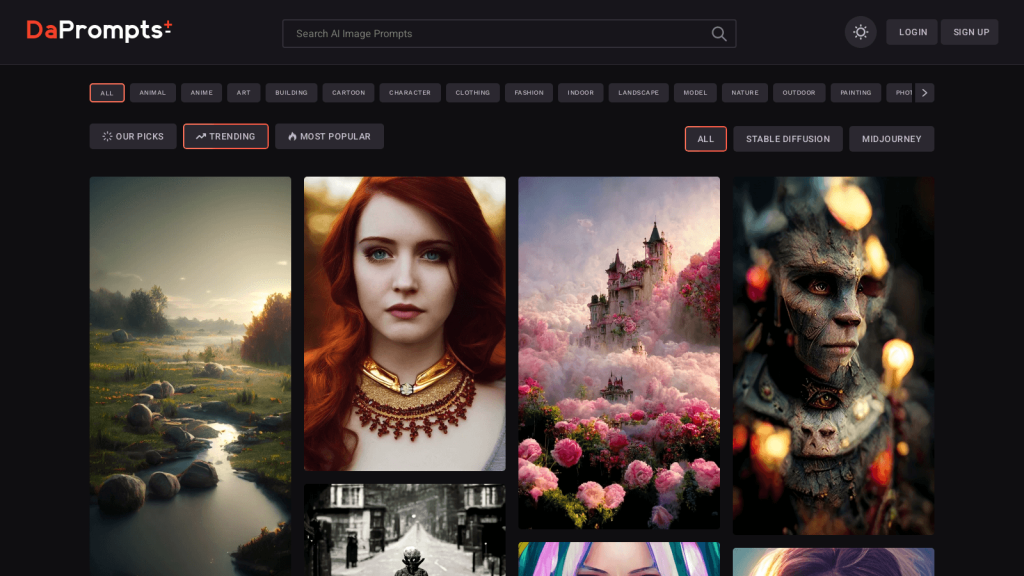

Discover DaPrompts: A Platform for AI-Generated Media

DaPrompts.com is a one-of-a-kind platform designed for creators to share, discover, and appreciate AI-generated media. Whether you’re an artist, writer, or content creator, DaPrompts offers a range of innovative tools and resources to help you unleash your creativity.

Stable Diffusion and Midjourney AI Art Models

One of the standout features of DaPrompts.com is its Stable Diffusion and Midjourney AI Art models. These models use advanced algorithms to generate stunning visuals that are sure to inspire your next project. Whether you’re looking for abstract designs or realistic imagery, these AI-generated art models have got you covered.

Innovative Prompts for Every Category

DaPrompts.com also offers a wide array of innovative prompts tailored to different categories. From sci-fi and fantasy to romance and horror, you’re sure to find a prompt that sparks your imagination. These prompts are designed to challenge and inspire you, helping you to push your creative boundaries and explore new ideas.

Real-World Use Cases

DaPrompts.com is an invaluable resource for anyone looking to create AI-generated media. Whether you’re a professional artist or a hobbyist, this platform offers a range of tools and resources to help you take your work to the next level. With its innovative prompts and advanced AI models, DaPrompts.com is a must-visit site for anyone looking to explore the cutting edge of creative technology.